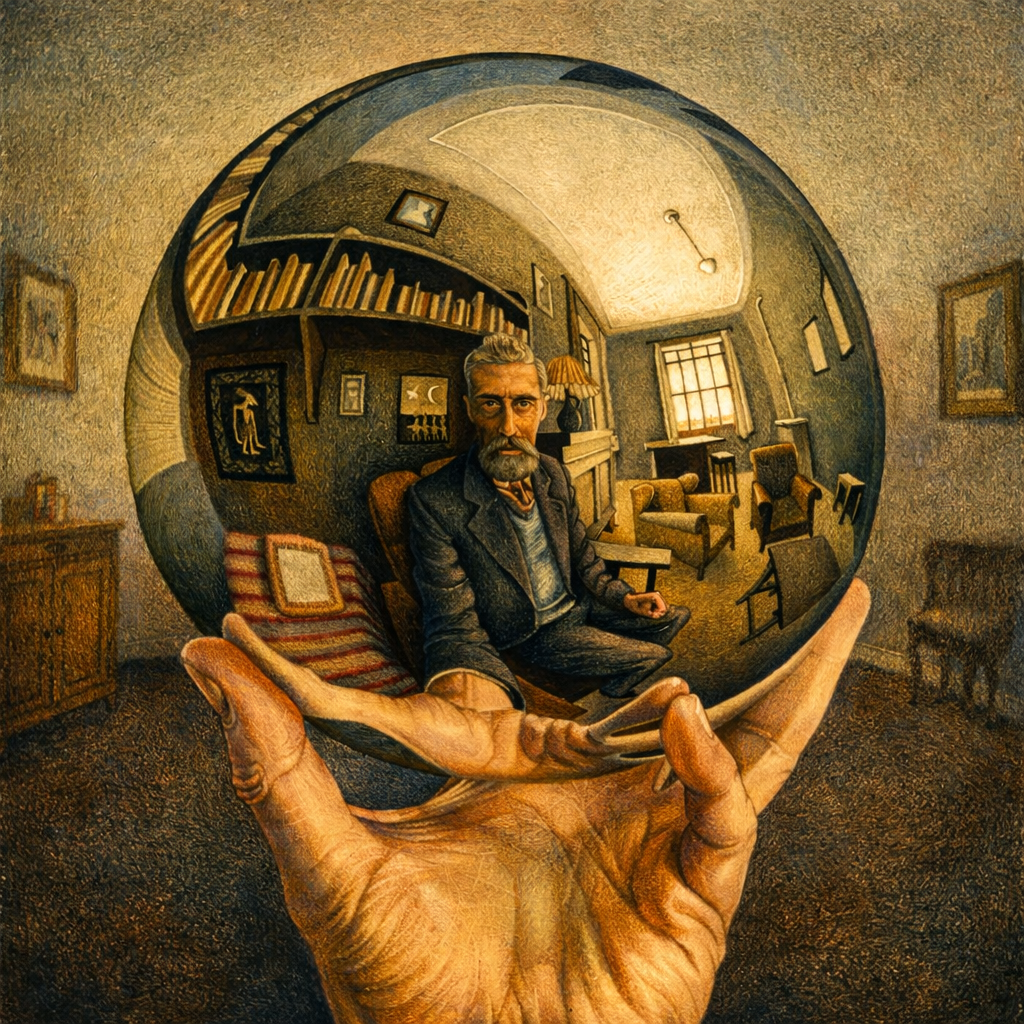

The Obvious is Not Enough

There’s a comforting myth we like to believe about deep insight.

That once you see deeply enough, you see everything.

Isaac Newton gave us calculus and a theory of gravity. Albert Einstein reshaped our understanding of space and time. These weren’t incremental improvements; they were new lenses for seeing reality itself.

And yet, both of them missed things hiding inside their own ideas.

Newton could explain the orbit of a planet around the Sun, but not the full long-term consequences of many planets perturbing one another over immense timescales. Einstein’s equations naturally allowed a dynamic universe, but he introduced the cosmological constant to hold the universe still.

This isn’t a flaw in their intelligence.

It’s relatively easy to understand the first-order consequences of your ideas, but much harder to see the second- and third-order ones. First-order consequences are direct. If this, then that. Gravity explains motion. Mass bends space-time. This is where things feel complete. But once you move beyond that, the nature of understanding changes. Second-order consequences are effects of effects. Third-order consequences are interactions between those effects. At this level, things stop being intuitive.

Einstein’s equations allowed black holes, but he resisted the idea. Newton could describe motion, but establishing long-term stability required mathematical tools that did not yet exist. In both cases, the consequences extended beyond what was immediately visible.

This seems to happen often. We are naturally good at understanding what happens, less good at following what happens next, and worse still at understanding what happens when multiple consequences interact.

This shows up outside physics as well. When you build something, the first-order consequence is that it works. The second-order consequence is that people start using it and adapting to it. The third-order consequence is that those behaviours interact and reshape the system itself.

Most problems don’t come from the first step. They come from not following the chain far enough.

There are frameworks that try to deal with this.

One way to look at it is through System Dynamics, introduced by Jay Forrester. Instead of thinking in straight lines, it suggests thinking in loops. Outcomes don’t just follow causes; they feed back into the system over time. Closely related to this is the idea of Feedback Loops. Some effects reinforce themselves and grow, while others balance and resist change. Most systems contain both, but we tend to notice only one at a time.
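To make the two kinds of loop concrete, here is a minimal sketch, not Forrester's own formulation. It tracks a single quantity over time. The function name `simulate` and the parameters `growth_rate` and `capacity` are made up for illustration. The reinforcing loop adds growth in proportion to the current level; the optional balancing loop shrinks that growth as the level approaches a limit.

```python
def simulate(stock, steps, growth_rate=0.0, capacity=None):
    """Step a single stock forward in time and return its history.

    growth_rate drives a reinforcing loop: the inflow is proportional
    to the current stock, so growth feeds further growth.
    capacity (optional) adds a balancing loop: the inflow shrinks
    as the stock approaches the capacity.
    All names here are illustrative assumptions.
    """
    history = [stock]
    for _ in range(steps):
        inflow = growth_rate * stock          # reinforcing loop
        if capacity is not None:
            inflow *= 1 - stock / capacity    # balancing loop
        stock += inflow
        history.append(stock)
    return history


# Reinforcing loop only: growth compounds without limit.
reinforcing = simulate(10.0, 50, growth_rate=0.2)

# Both loops together: early growth looks the same, then levels off.
both = simulate(10.0, 50, growth_rate=0.2, capacity=1000.0)
```

For the first few steps the two runs look almost identical, which is the point: if you only watch the reinforcing loop, the balancing loop stays invisible until it starts to dominate.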

Another useful idea comes from Chris Argyris in the form of Double-loop Learning. When something goes wrong, we tend to fix the action. But sometimes the issue is not the action; it’s the assumption behind it. Instead of asking “what did I do wrong?”, the better question becomes “what assumptions or mental model led me here, and could they be wrong?”

You can think of it this way:

[ Error = Theory × Data × Initial Assumptions ]

We usually look for errors in the data or the execution. Rarely in the assumptions. But higher-order consequences often hide there, in what we take for granted while building the idea.

A simple approach is to deliberately think in layers. Start with what happens, then ask what changes because of it, and then look at how those changes might interact. You won’t get all the way, but you will get further than stopping at the first answer.

These ideas don’t eliminate the problem. They change how you approach it.

The goal is not to predict everything. That’s not possible. The goal is to not stop too early.

Don’t just think in lines. Think in loops. And occasionally, question the map or the lens you’re using to think in the first place.